
False Positives and False Negatives

Testing is not perfect.

Tests can identify who has the novel coronavirus that causes COVID-19. The Department of Health and Social Care publishes updates on the number of confirmed cases in the UK.

A confirmed case must have a positive test result, but testing is imperfect. This article illustrates how false test results affect the way we interpret those numbers.

Sensitivity

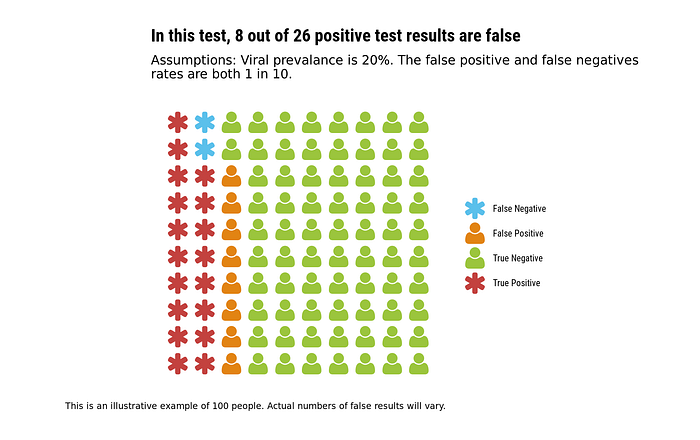

Imagine that one in five people have a virus. We take a sample that is a perfect slice of that population, so of our 100 people, 20 have the virus. Scientists then test everyone in the sample:

- False negative: for 1 in 10 people who have the virus, the test gives a wrong ‘negative’ result. These people have the virus, but the test does not detect it.

- False positive: for 1 in 10 people who do not have the virus, the test gives a wrong ‘positive’ result. These people are not infected, but the test reports the virus anyway.

In each example, I use average rates of false results. This keeps the illustration simple; in real-world batches, the actual number of false results will vary.
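To see how much real batches can vary around those averages, here is a minimal simulation sketch. It assumes every person's result is wrong independently, with the stated probability; the function name `simulate_batch` and the seed value are my own illustrative choices, not part of any real testing protocol.

```python
import random

def simulate_batch(n_infected, n_uninfected, fn_rate, fp_rate, rng):
    """Test every person once; each result is wrong with the given probability."""
    # Each infected person is missed (false negative) with probability fn_rate.
    false_neg = sum(rng.random() < fn_rate for _ in range(n_infected))
    # Each uninfected person is wrongly flagged (false positive) with probability fp_rate.
    false_pos = sum(rng.random() < fp_rate for _ in range(n_uninfected))
    return {
        "true_pos": n_infected - false_neg,
        "false_neg": false_neg,
        "false_pos": false_pos,
        "true_neg": n_uninfected - false_pos,
    }

rng = random.Random(2020)  # fixed seed so the run is repeatable

# Three individual batches of 100 people: the counts differ from batch to batch.
for _ in range(3):
    print(simulate_batch(20, 80, 0.10, 0.10, rng))

# Averaged over many batches, the counts settle near the expected values.
batches = [simulate_batch(20, 80, 0.10, 0.10, rng) for _ in range(2000)]
mean_fp = sum(b["false_pos"] for b in batches) / len(batches)
print(f"mean false positives per batch: {mean_fp:.2f}")
```

Individual batches scatter, but the mean number of false positives over 2,000 batches lands close to 8, which is 1 in 10 of the 80 uninfected people.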

On average, the test gives a true ‘positive’ result for 9 in 10 infected people, because the false negative rate is 10%. In our sample, that is 18 of the 20 people with the virus. Another name for the true positive rate is the test’s sensitivity.
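The average outcome counts for this first example can be tallied in a few lines. This is an illustrative sketch: the helper name `expected_counts` is hypothetical, and it simply multiplies each group size by its error rate, using `Fraction` so the results stay exact.

```python
from fractions import Fraction

def expected_counts(n_infected, n_uninfected, fn_rate, fp_rate):
    """Average outcome counts for one sample, given the two error rates."""
    false_neg = n_infected * fn_rate
    false_pos = n_uninfected * fp_rate
    return {
        "true_pos": n_infected - false_neg,
        "false_neg": false_neg,
        "false_pos": false_pos,
        "true_neg": n_uninfected - false_pos,
    }

# First example: 20 infected, 80 uninfected, 1 in 10 results wrong either way.
counts = expected_counts(20, 80, Fraction(1, 10), Fraction(1, 10))
sensitivity = counts["true_pos"] / 20  # true positive rate = 1 - false negative rate

print(counts["true_pos"], counts["false_neg"], counts["false_pos"], counts["true_neg"])  # 18 2 8 72
print(float(sensitivity))  # 0.9
```

So the average sample holds 18 true positives, 2 false negatives, 8 false positives and 72 true negatives.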

Specificity

Different tests have different chances of false results.

Consider another test on the same sample of 100 people. Here, 1 in 80 uninfected people get a false ‘positive’ result, yet 3 in 10 infected people receive an incorrect ‘negative’ result.

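Whilst 20 people have the virus, there are only 15 positive results: 14 true positives (7 in 10 of the 20 infected people) and 1 false positive (1 in 80 of the 80 uninfected people).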
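The count of 15 positive results can be checked directly. This is a small illustrative sketch using the average rates as exact fractions, not a model of any particular real test.

```python
from fractions import Fraction

n_infected, n_uninfected = 20, 80
fn_rate = Fraction(3, 10)  # 3 in 10 infected people get a false 'negative'
fp_rate = Fraction(1, 80)  # 1 in 80 uninfected people get a false 'positive'

true_pos = n_infected * (1 - fn_rate)  # 20 x 7/10 = 14
false_pos = n_uninfected * fp_rate     # 80 x 1/80 = 1
positives = true_pos + false_pos

print(f"{true_pos} true + {false_pos} false = {positives} positive results")
# 14 true + 1 false = 15 positive results
```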

As the false positive rate is 1.25% (1 in 80), the true negative rate is 98.75%. This true negative rate is also called the test’s specificity.
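Both rates can be read off the counts of each outcome. The sketch below defines two small functions, `sensitivity` and `specificity` (my own illustrative names), and applies them to the average counts for this second test: 14 true positives and 6 false negatives among the 20 infected people, and 79 true negatives and 1 false positive among the 80 uninfected people.

```python
def sensitivity(true_pos, false_neg):
    """Share of infected people who test positive (true positive rate)."""
    return true_pos / (true_pos + false_neg)

def specificity(true_neg, false_pos):
    """Share of uninfected people who test negative (true negative rate)."""
    return true_neg / (true_neg + false_pos)

# Average counts for the second test on the sample of 100 people.
print(f"sensitivity: {sensitivity(14, 6):.2%}")  # sensitivity: 70.00%
print(f"specificity: {specificity(79, 1):.2%}")  # specificity: 98.75%
```

The same two functions give 90% for both rates when applied to the first test’s counts (18, 2, 72 and 8).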

This test has high specificity but low sensitivity (70%, as 3 in 10 infected people are missed). It is like fishing: there may be fish in the river, but you will not catch them every time.